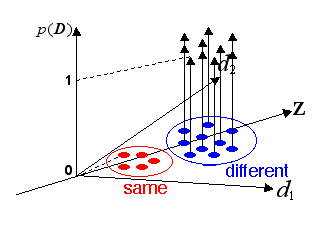

This research aims to judge whether a pair of similar images shows objects in the same category or similar objects in different categories, and to estimate the confidence of this judgment statistically. First, various images of the target objects are collected; in the example below, these are images of leaves. Then distances between image characteristics, such as colors and spatial frequency distributions, are calculated for "pairs of images in the same category" (in this example, pairs of leaves from the same kind of tree) and "pairs of images in different categories" (pairs of leaves from different kinds of trees). Each pair is placed as a point in the feature space whose axes are the differences of image characteristics; pairs in the same category are assigned the value 0, and pairs in different categories are assigned the value 1. The function that best fits these points and values is then derived by logistic regression analysis. When a new pair of images is given, it is placed in the same feature space and the value of the derived function is calculated. This value indicates the confidence of the judgment that the two images belong to different categories. Currently, improving the precision of the judgment and reducing the computational time are being investigated by combining this method with three-layer neural networks.

In neural network terms, logistic discrimination is equivalent to a two-layer feed-forward neural network. Although the statistical model behind logistic discrimination is clear, it is not applicable when the discrimination boundary is complicated, because the boundary it produces is linear. The three-layer neural network, an extension of logistic discrimination, is applicable to complicated problems, but it is not clear what statistical model it employs, and its training requires a lot of computational time. We propose a hybrid method combining logistic discrimination and the three-layer neural network, which discriminates simple cases using logistic discrimination and complicated cases using the neural network. This method greatly reduces computational time, while its discrimination accuracy is nearly the same as that of the pure neural network. A degree of confidence based on the clear statistical model is obtained in all cases except those near the discrimination boundary.
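The procedure described above can be illustrated with a minimal Python sketch. It is not the implementation used in this research. Several things in it are assumptions made only for illustration: the two-dimensional feature vectors and the synthetic clusters standing in for leaf images, the use of absolute differences as the distance between characteristics, the plain gradient-descent fit, the `margin` rule that decides which cases count as "near the boundary," and the `placeholder` callable that stands in for a trained three-layer neural network. All function names are hypothetical.

```python
import math
import random

def pair_features(a, b):
    """Per-characteristic absolute differences between two feature vectors."""
    return [abs(x - y) for x, y in zip(a, b)]

def sigmoid(z):
    """Logistic function, written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def fit_logistic(X, y, lr=1.0, epochs=500):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n, d = len(X), len(X[0])
    w, bias = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + bias) - yi
            grad_w = [g + err * xj for g, xj in zip(grad_w, xi)]
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        bias -= lr * grad_b / n
    return w, bias

def confidence_different(w, bias, a, b):
    """Estimated confidence that images a and b are in different categories."""
    d = pair_features(a, b)
    return sigmoid(sum(wj * dj for wj, dj in zip(w, d)) + bias)

def hybrid_judge(w, bias, a, b, fallback, margin=0.2):
    """Use the logistic model away from the boundary, else defer to fallback.

    Returns (route, judged_different, confidence); the confidence is None
    when the decision was deferred to the fallback classifier.
    """
    p = confidence_different(w, bias, a, b)
    if abs(p - 0.5) >= margin:
        return "logistic", p >= 0.5, p
    return "fallback", fallback(pair_features(a, b)), None

# Synthetic data (made up): two "tree kinds" as clusters of 2-D feature vectors.
random.seed(0)

def sample(center):
    return [random.gauss(c, 0.05) for c in center]

kind_a, kind_b = (0.2, 0.3), (0.8, 0.7)
X, y = [], []
for _ in range(100):
    same = random.choice([kind_a, kind_b])
    X.append(pair_features(sample(same), sample(same)))      # same category
    y.append(0)
    X.append(pair_features(sample(kind_a), sample(kind_b)))  # different categories
    y.append(1)

w, bias = fit_logistic(X, y)

# Placeholder only: a trained three-layer network would be plugged in here.
placeholder = lambda d: sum(d) > 0.4

print(hybrid_judge(w, bias, [0.2, 0.3], [0.21, 0.31], placeholder))
print(hybrid_judge(w, bias, [0.2, 0.3], [0.8, 0.7], placeholder))
```

In this sketch the costly classifier is consulted only when the logistic output falls within `margin` of 0.5, which mirrors the idea that simple cases are settled by logistic discrimination and that no model-based confidence is reported near the boundary.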

Selected publication

The following paper is part of "multiorganic diagnosis using panoramic radiograph." It presents an image binarization method based on statistical clustering and is a successor to the statistical object identification research on this page.
